The "10x engineer" is a dying myth. In its place, something more interesting is emerging: the harness engineer.

Software has always been a ladder of abstractions. Assembly gave way to C. C gave way to managed languages. Managed languages gave way to frameworks and cloud services. Each step felt like a crisis at the time. Each turned out to be inevitable. We just hit the next rung: we no longer just write code. We orchestrate systems that write it for us.

But the industry is obsessed with the wrong thing. The breakthrough isn't the model. It's the harness.

The three eras of AI integration

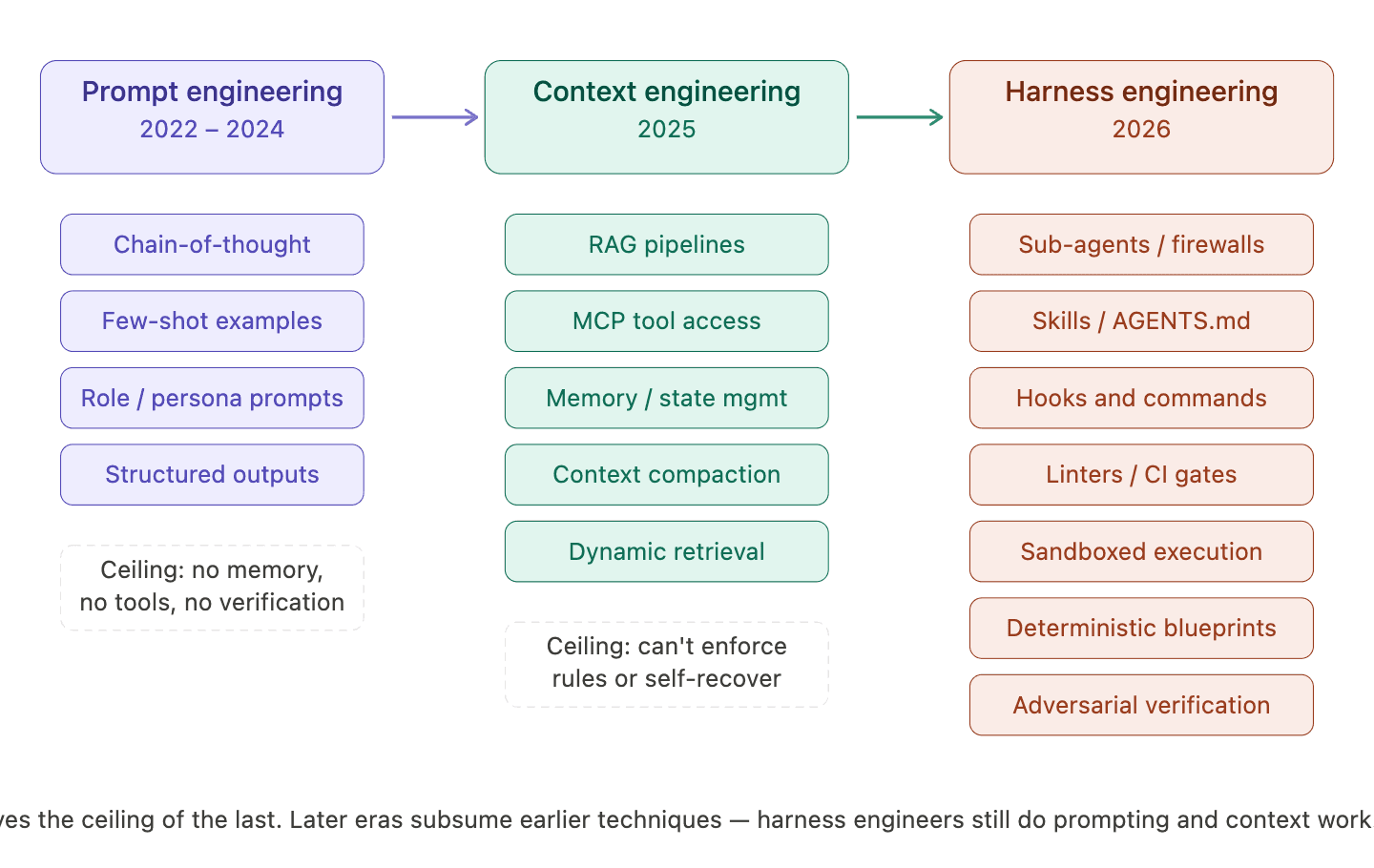

If 2024 was about the prompt and 2025 was about context, 2026 is the year of the harness. Each era hit a ceiling that only the next could solve.

Prompt engineering (2022–2024) was the art of the single instruction. We obsessed over chain-of-thought reasoning, and it worked surprisingly well for simple tasks. But no matter how clever the prompt, the model had no memory between sessions, no access to external tools, and no way to verify its own output. You could ask it to write a function, but you couldn't ask it to build and test a feature across a real codebase.

Context engineering (2025) was the realization that instructions alone aren't enough. The model needs working memory. Andrej Karpathy defined this as the discipline of filling the context window with the right information at each step: pulling in relevant files via RAG, connecting tools through MCP, and managing conversation history so the model doesn't lose track of what it's doing. The ceiling: even with perfect information, models still couldn't enforce architectural rules, recover from their own mistakes, or work reliably across multiple sessions on a large project.

Harness engineering (2026) is the current frontier. It's the infrastructure layer that governs how the model operates. Not just what the model sees, but the constraints, verification loops, and deterministic guardrails that turn a hallucination-prone system into a reliable production tool.

Harness engineering is a subset of context engineering: everything the harness does ultimately shapes what ends up in the model's context window. A linter doesn't fix code directly. It produces an error message that enters the context, and the model fixes the code. A sandbox doesn't prevent bad logic. It surfaces errors that become context for the next attempt. The harness is infrastructure for managing context at scale.

Context rot

The harness engineer exists because of a hard empirical truth: context rot. Chroma's research across 18 frontier models showed that model performance degrades as input length increases, even when the context window isn't close to full. Every model tested got worse at every length increment. The reason is architectural: transformer attention compares every token with every other token, so its cost grows quadratically, and as tokens accumulate, each one gets a thinner slice of the model's attention budget.

Dex Horthy of HumanLayer, drawing from over 100,000 developer sessions, identified the practical threshold: once you pass roughly 40% of the context window, you enter what he calls the "dumb zone." The agent's reasoning becomes systematically unreliable.
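A harness can track this threshold mechanically. The sketch below is only an illustration: the `ContextBudget` class, its field names, and the 200,000-token window are assumptions, and the 0.40 cutoff is simply the rough figure quoted above, not a measured constant.

```python
from dataclasses import dataclass

# Rough threshold quoted in the text; treat it as a tunable assumption.
DUMB_ZONE_FRACTION = 0.40


@dataclass
class ContextBudget:
    """Tracks how much of the context window a session has consumed."""

    window_tokens: int    # total size of the model's context window
    used_tokens: int = 0  # tokens currently occupying the window

    def utilization(self) -> float:
        # Fraction of the window in use, between 0.0 and 1.0.
        return self.used_tokens / self.window_tokens

    def should_compact(self) -> bool:
        # True once the session reaches the assumed threshold.
        return self.utilization() >= DUMB_ZONE_FRACTION


# Hypothetical session: 90,000 tokens used in a 200,000-token window.
budget = ContextBudget(window_tokens=200_000, used_tokens=90_000)
if budget.should_compact():
    print(f"utilization {budget.utilization():.0%}: compact before continuing")
```

In practice the token count would come from the model provider's tokenizer or usage metadata rather than a hand-set number.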

Research, plan, implement

The most effective way to use a coding agent isn't to ask for code immediately. Dex Horthy's framework from his YC Root Access talk lays out a three-phase workflow that consistently outperforms the naive approach.

Research comes first. Before any code changes, the agent analyzes the codebase and produces a compact research file: relevant filenames, line numbers, and a system overview. This prevents the agent from flooding its own context with large, irrelevant data. The research file becomes the agent's map of the territory.

Plan comes second. The agent produces a detailed implementation plan: specific file changes, code snippets, and a testing strategy. A human reviews this plan, not the code itself. This is the critical insight. Reviewing a plan is fast. Reviewing thousands of lines of generated code is slow and error-prone. It also keeps the context window clean: it contains the approved strategy, not three failed attempts at a fix.

Implement comes last. The agent writes code against the approved plan. The key constraint: keep context utilization under 40%. When transitioning between sub-tasks, the harness intentionally compacts the history, keeping the progress summary and discarding the verbose logs. Automatic compaction is too blunt for this. The harness engineer designs the compaction strategy deliberately.
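One way to picture deliberate compaction at a sub-task boundary is a filter over typed history entries. This is a minimal sketch, not any particular tool's behavior: the `Entry` type, the `kind` labels ("plan", "summary", "log"), and the `compact_at_boundary` helper are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Entry:
    kind: str  # hypothetical labels: "plan", "summary", or "log"
    text: str


def compact_at_boundary(history: List[Entry], finished_task: str) -> List[Entry]:
    """Drop verbose logs and record a one-line summary of the finished sub-task."""
    kept = [entry for entry in history if entry.kind != "log"]
    kept.append(Entry("summary", f"Completed: {finished_task}"))
    return kept


history = [
    Entry("plan", "1. add endpoint 2. add tests"),
    Entry("log", "pytest output ... " * 200),   # verbose tool output
    Entry("log", "stack trace from first attempt"),
]
history = compact_at_boundary(history, "add endpoint")
```

The design choice is that the harness, not the model, decides what survives: the approved plan and progress summaries stay, while raw tool output is discarded once the sub-task is done.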

The hierarchy of effort is the most important takeaway: time invested in research and planning delivers far greater returns than time spent on implementation. A single misunderstanding during research cascades into thousands of lines of wrong code.

The four systems of a production harness

Every serious production harness converges on four interlocking systems. Each one addresses a specific class of failure that prompting alone cannot prevent.

Deterministic constraints

Some decisions should never be left to the model's judgment. A harness engineer identifies these decisions and hardcodes them.

OpenAI takes this further with mechanical architecture enforcement. Their codebase enforces a strict dependency layering: Types → Config → Repo → Service → Runtime → UI. Each layer can only import from the layer to its left. This isn't documented as a suggestion in a README. It's enforced by custom linters and structural tests that run in CI. When an agent violates a boundary, the error message doesn't just say "violation"; it explains why the boundary exists and provides specific instructions for how to fix it. The linter becomes the teacher.
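A layering check of this kind can be sketched in a few lines. The following is not OpenAI's linter; it is a hypothetical `check_import` function that assumes "to its left" means any earlier layer (tighten the comparison if only the adjacent layer is intended), and the wording of the error message is illustrative.

```python
from typing import Optional

# Layer order taken from the text. Assumption: a layer may import from
# itself or from any layer to its left, not only the adjacent one.
LAYERS = ["types", "config", "repo", "service", "runtime", "ui"]


def check_import(importer_layer: str, imported_layer: str) -> Optional[str]:
    """Return None if the import is allowed, else a teaching error message."""
    src = LAYERS.index(importer_layer)
    dst = LAYERS.index(imported_layer)
    if dst <= src:
        return None  # importing from the same layer or one to the left
    allowed = ", ".join(LAYERS[: src + 1])
    return (
        f"Layer violation: '{importer_layer}' imports from '{imported_layer}'.\n"
        f"Why: dependencies flow one way ({' -> '.join(LAYERS)}) "
        f"so earlier layers never depend on later ones.\n"
        f"Fix: '{importer_layer}' may import only from: {allowed}. "
        f"Move the shared code into one of those layers, or have "
        f"'{imported_layer}' pass it in as an argument."
    )


message = check_import("repo", "service")
if message:
    print(message)
```

The returned message is what enters the agent's context, which is why it states the reason and the remedy instead of a bare failure code.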

The counterintuitive result: constraining what the agent can do makes it more productive, not less. When the solution space is infinite, the agent wastes tokens exploring dead ends. When clear boundaries exist, it converges faster on correct solutions.

Agentic security

An unsupervised agent with network access and a shell is a security incident waiting to happen. The harness acts as a whitelist proxy between the agent and the outside world.
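The core of such a proxy is an allowlist check applied before any outbound request leaves the sandbox. The sketch below assumes a hypothetical `ALLOWED_HOSTS` set and an `is_allowed` helper; the hostnames are examples only, and a real proxy would also need to handle redirects, DNS, and non-HTTP traffic.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist: the only hosts the agent may reach.
ALLOWED_HOSTS = {"pypi.org", "files.pythonhosted.org"}


def is_allowed(url: str) -> bool:
    """Permit only HTTPS requests to hosts that are explicitly listed."""
    parts = urlsplit(url)
    # Exact hostname match, so "pypi.org.evil.example" is rejected.
    return parts.scheme == "https" and parts.hostname in ALLOWED_HOSTS


for candidate in (
    "https://pypi.org/simple/requests/",
    "https://pypi.org.evil.example/payload",
    "http://pypi.org/simple/requests/",
):
    print(candidate, "->", "allow" if is_allowed(candidate) else "deny")
```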

This extends to tool access. Stripe built a centralized MCP server called "Toolshed" that hosts roughly 500 internal tools. But giving an agent 500 tools causes what practitioners call "token paralysis": the model spends so many tokens reasoning about which tool to use that it degrades at the actual task. The harness curates a subset of around 15 relevant tools per task. More tools aren't better. The right tools are better.
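Curation can be as simple as ranking a registry by relevance and truncating. The sketch below is not how Stripe's Toolshed selects tools; the `Tool` type, the tag-overlap scoring, and the `curate` function are assumptions made for illustration, with the limit of 15 taken from the figure above.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Tool:
    name: str
    tags: FrozenSet[str]  # hypothetical metadata describing what the tool is for


def curate(registry: List[Tool], task_tags: FrozenSet[str], limit: int = 15) -> List[Tool]:
    """Return at most `limit` tools, ranked by how many tags match the task."""
    scored = [(len(tool.tags & task_tags), tool) for tool in registry]
    relevant = [(score, tool) for score, tool in scored if score > 0]
    # Highest overlap first; ties broken by name so the result is deterministic.
    relevant.sort(key=lambda pair: (-pair[0], pair[1].name))
    return [tool for _, tool in relevant[:limit]]


# Hypothetical registry of 500 tools, 50 of which are tagged "billing".
registry = [
    Tool(f"tool_{i}", frozenset({"billing"} if i % 10 == 0 else {"misc"}))
    for i in range(500)
]
picked = curate(registry, frozenset({"billing"}))
```

Only the curated tools' descriptions are placed in the model's context; the rest of the registry stays out of the window entirely.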

Sandboxed verification

The agent needs an environment where it can fail safely, learn from the failure, and try again, all without a human watching over its shoulder.
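The fail, learn, retry loop can be sketched with a subprocess and a temporary directory. This is an illustration of the control flow only: a temporary directory with a timeout is not a real security sandbox (production harnesses typically use containers or VMs), and `generate` is a hypothetical stand-in for a call to the model.

```python
import subprocess
import sys
import tempfile
from pathlib import Path
from typing import Callable, Optional, Tuple


def run_in_sandbox(code: str, timeout_s: float = 10.0) -> Tuple[bool, str]:
    """Run candidate code in a throwaway directory; return (ok, error text)."""
    with tempfile.TemporaryDirectory() as workdir:
        script = Path(workdir) / "candidate.py"
        script.write_text(code)
        try:
            proc = subprocess.run(
                [sys.executable, str(script)],
                cwd=workdir,
                capture_output=True,
                text=True,
                timeout=timeout_s,
            )
        except subprocess.TimeoutExpired:
            return False, f"timed out after {timeout_s}s"
        return proc.returncode == 0, proc.stderr


def verify_loop(generate: Callable[[str], str], max_attempts: int = 3) -> Optional[str]:
    """Ask for code, run it, and feed any error back into the next attempt."""
    feedback = ""
    for _ in range(max_attempts):
        code = generate(feedback)  # hypothetical model call
        ok, feedback = run_in_sandbox(code)
        if ok:
            return code
    return None  # give up and escalate to a human


def fake_generate(feedback: str) -> str:
    # Stand-in for the model: produces a fix only after seeing the error.
    return "assert 1 + 1 == 2" if "AssertionError" in feedback else "assert 1 + 1 == 3"


result = verify_loop(fake_generate)
```

The error text returned by the sandbox is the only thing carried into the next attempt, which mirrors the idea that the harness works by shaping context.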

Google's MoMoA architecture adds adversarial verification on top of sandboxed execution. Every work phase pairs two agents with deliberately conflicting personas (a conservative developer focused on stability and a creative developer focused on novel solutions) and prompts them to challenge each other's assumptions. A separate "Ask an Expert" tool provides independent review without access to the chat history that produced the work, which breaks the confirmation bias that comes from shared context. For a hallucinated snippet to reach the final output, it has to survive every independent checkpoint in the chain.

Telemetry and context snapshots

When an agent fails, logs alone aren't sufficient. The harness captures what might be called a context snapshot: a complete picture of exactly what was in the model's context window at the moment of failure. This lets the engineer replay the failure and fix the harness rather than just re-running the prompt and hoping for a better outcome.
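A context snapshot can be a plain serialized record of what the model was given. The sketch below assumes a hypothetical `ContextSnapshot` shape (messages, tool names, error, timestamp); real harnesses will capture whatever their model API actually receives.

```python
import json
import time
from dataclasses import asdict, dataclass
from typing import Dict, List


@dataclass
class ContextSnapshot:
    messages: List[Dict[str, str]]  # exact messages sent to the model
    tools: List[str]                # names of tools exposed for this call
    error: str                      # what went wrong
    captured_at: float              # Unix timestamp of the failure


def capture(messages: List[Dict[str, str]], tools: List[str], error: str) -> str:
    """Serialize the failing context so it can be stored and replayed later."""
    snapshot = ContextSnapshot(messages, tools, error, time.time())
    return json.dumps(asdict(snapshot), sort_keys=True)


def replay_input(serialized: str) -> List[Dict[str, str]]:
    """Recover the exact messages to re-run against a modified harness."""
    return json.loads(serialized)["messages"]


messages = [
    {"role": "system", "content": "Follow the approved plan."},
    {"role": "user", "content": "Implement step 2."},
]
stored = capture(messages, ["run_tests", "read_file"], "tests failed: 3 errors")
```

Because the snapshot holds the inputs rather than just the outputs, the engineer can change one part of the harness and replay the same context to see whether the failure disappears.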

Anthropic's approach embeds this pattern directly into their long-running agent architecture. Each coding session ends with a git commit (providing a revertible checkpoint) and a progress file update (providing a readable summary). If the next session discovers the codebase is broken, it can trace back through the git history, identify where things went wrong, and recover a working state without having to guess at what happened.

The bottom line

The mechanical side of programming is being delegated to the harness. This doesn't make the engineer obsolete. It shifts the job to systems architecture, environmental design, and failure mode analysis.

The harness engineer is a mechanic, not a poet. Every hour spent understanding how a system actually fits together now compounds across a fleet of autonomous workers rather than just your own output.

The harness is the lever. Build it well.

References and further reading

Chroma Research (2025). Context Rot: How Increasing Input Tokens Impacts LLM Performance. Technical Report

Stripe Engineering (2026). Minions: Stripe's One-Shot End-to-End Coding Agents. Stripe Dev Blog

Karpathy, A. (2025). Software 3.0. Technical Essay

OpenAI Engineering (2026). Harness Engineering: Leveraging Codex in an Agent-First World. OpenAI Blog

Anthropic Engineering (2025). Effective Harnesses for Long-Running Agents. Anthropic Blog

Google Labs (2026). MoMoA: Mixture of Mixture of Agents. labs.google/code

Fowler, M. (2026). Harness Engineering. martinfowler.com

HumanLayer (2026). Skill Issue: Harness Engineering for Coding Agents. HumanLayer Blog